In the previous post we learned about the mediant, which is the “wrong” way to add rationals : . While this operation is not well defined, and depends on the presentation of the rational numbers and not just their values, we saw that it still has interesting properties, and in particular

where

and

. Moreover, if we start with

and add their mediants to get

, and then repeat the process by adding the mediants of each subsequent pair

, and continue repeating it again and again, then we get all of the rationals in

.

In the previous post we tried to keep the math elementary as possible. In this post we will go a little bit more advanced and take a look at this operation from several mathematical perspectives.

Some linear algebra

It is almost always good when linear algebra appears when trying to study a subject. It is generally simple to handle, and there are many useful results in linear algebra which are just waiting to be used, and this case of mediants is not different.

In the previous post there were already some clues that there is some linear algebra in the background. The first one was the simple inequality of rational numbers , where for positive

is equivalent to

. This expression is non other than the determinant of the matrix

being negative. This determinant also appeared later on as the area of parallelograms. However, the place that is best to start with, is our process of finding all the rationals in

using mediant computations.

Instead of looking at the points separately, let’s look at the segments, because in the end each mediant is an average of the endpoints of a segment. Furthermore, as we saw in the previous post, looking at the rationals is a bit misleading, and we should actually think about them as integer vectors with two coordinates. So all in all a segment should be represented by 2 vectors with 2 entries each, or better yet – a matrix.

The matrix corresponding to the first segment is simply the matrix

. This segment splits to

and

which correspond to

and

. Next, we will have the matrices

,

,

and

etc.

Each time we split a segment to two, the corresponding matrix “splits” to two, each one contains one of the columns of the original matrix (one of the end points of the original segment) and the second is the sum of the two columns of the original matrix (the mediant!). This behavior is best described using simple matrix multiplication:

.

If we set and

, then multiplying by

(respectively

) from the right is the same as doing our mediant step to split a segment and go down to the left part (respectively right part). If we also set

to be our starting matrix, then the matrices we are getting in our process are exactly those of the form

where all the

. In other words, we have

where

is in the semigroup generated by

(like a group, but do not need invertibles).

Note that and that

, so that each matrix appearing in our process has determinant

(this is exactly the same result of the invariant areas of parallelogram that we saw in the previous post, only there we used the cut and glue method). Also, since the entries of

and

are nonnegative, so are the entries of their product. We can now ask whether the opposite is true – if a matrix has nonnegative entries and determinant

, can it be written as

? This is almost true, however there is a very simple sounter example –

. Fortunately we are not that far off since

. This is not only some algebraic problem that we need to solve – it actually has a nice geometric interpretation. Instead of starting with

and

, we start with

and

. Using this presentation for infinity, and doing our mediant process, you can go over the proof from the previous post and show that you can get any nonnegative rational. However, since we now have the langauge of linear algebra, we can do much more.

Theorem: Let be an integer valued matrix with nonnegative entries and

. Then we can write it as

. In particular, one of the segments in the mediant process (starting at

) will be

.

Proof: We prove by induction on . The minimum of this sum for a determinant

matrix is 2, and the only matrix with a sum equals to 2 is

, in which case the theorem is trivial.

If , we claim that either the entries in the left column of

are larger than the right column (namely

) or the right columns is larger than the left. Indeed, if we assume by negation that

and

, then since we are dealing with integers, we have that

. Under the assumption that

and

, it follows that

. Completing it to a determinant

matrix also implies that

so we have the matrix

. Similarly if

and

, then

which is not possible at all.

Saying that the left column is larger than the right, so that exactly means that

has only nonnegative entries. Moreover, it still has determinant

and the sum of the entries is

(in an invertible matrix we can’t have

). We can now use the induction step to finish the proof. A similar argument works if the right column is smaller than the left, but this time multiply by

.

This was a very simple proof using induction on the size of the entries, but under the current formulation it is not enough to prove that every nonnegative rational number can appear as one of the endpoints of the segments in our process. For that we need to understand what are the nonnegative integer value matrices with determinant . Luckily for us, we understand them quite well.

Lemma: Let be nonnegative coprime integers. Then there are integers

such that

. Moreover, any other solution

for

satisfy

for some

.

Before we prove the theorem, we have an immediate corollary:

Corollary: If are coprime, then

appears in the mediant process starting with

In particular, any nonnegative rational number appears in the process as an edge point.

Proof (corollary): Apply the lemma for and find

such that

. If we take

large enough, then

has nonnegative entries. We can now apply the last theorem to the matrix

to get that

is a segment appearing in our mediant process which completes the proof.

Proof (lemma): It is well known basic result that each coprime integers have some integers

such that

. If

is any other such solution, then subtracting the equations gives us

. Since

we get that

. Since

it follows that

, so we can write

for some integer

. Similarly, we get that

for some integer

and injecting them into our equation gives

. Thus

which is what we wanted to prove.

We now reproved the proof from the previous post, using some simple linear algebra and got a much stronger result. Moreover, we saw that the segments appearing in our mediant process have a nice structure – it is basically an orbit of the semigroup generated by and

. Next, let us combine linear algebra with some combinatorics.

Growing trees

Once we start to look on segments instead of rational numbers, some results become clearer to see. The first one relies on the simple fact that each segment splits to exactly two nonoverlapping segments (except the edges). If we connect each segment to its two parts we get a directed binary tree:

Another way to think of this tree, is instead of taking the segments as vertices, to take the mediants of their end points (so that the root is , its children are

and

etc). This tree is called the Stern-Brocot tree.

The important property of a tree, is that if we walk along two branches without backtracking, then it is enough to have one place where the branches diverge to conclude that we end up in different places. For example, if we go Right, Right, Left we end at the segment , while going Right, Left we end up at

.

Translating the example above to our linear algebra setting, this means that the matrices and

are different, and therefore

. More generally, this tree structure means that if we have two words over

, say

for

describing two different pathes, then

. This type of structure on a semigroup has a name and is called a free semigroup. More or less it says that elements corresponding to two words are the same only for trivial reasons (the words are the same). Just proving this results without this tree structure is not trivial, not to mention that even knowing to ask this question is not automatic in any way.

On circles and lines

To study our ill-defined mediant operation, we first went up from rationals on the real line to integer vectors on the plane and then to integer matrices, and in the end we always try to see what the results that we get imply on our original problem with rational numbers. We now try to formalize this problem a bit.

Suppose that we want to go Right, Right, Left and then look on the rational number on the right endpoint. First, considering the segments, we need to look at the matrix . Second, the right endpoint is the right column, so we are looking at

. Finally we divide the first entry by the second to get

.

We acted with on the vector

and took the “shadow” of the resulting vector. Can we instead act on the “shadow” of the first vector, namely have something like

? More generally, for a

matrix

such that

we want a new operation defined by

. When we took the mediant of two rationals it was not well defined, however somehow this matrix operation is well defined, and can be written as

.

This is a well known operation called the Mobius transformation. It is defined in general for any complex matrix and acts on complex numbers.

The Mobius transformations have all sorts of nice properties. One of the most important is:

Theorem: The Mobius transformations are bijective maps on the complex plane. Moreover, if is a line or a circle in the complex plane

, and

is any Mobius transformation, then

is again a line or a circle.

There is an extra nice property that Mobius transformations coming from real matrices satisfy. In our case the matrices are integer valued, so in particular they are real valued. They also all have determinant , however, this is only because we multiply by

in the beginning which has determinant

. We can just as well multiply by the identity matrix, and the reason that we chose

instead, is because we use to think of

as being on the left of

being on the left of

. With this small technicality out of the way, we may assume that out matrices have positive determinant, so we can applay the following.

Claim: If is a real valued matrix with positive determinant, then the real axis and the upper half plane (namely

) are invariant under the corresponding Mobius transformation.

Both of the theorem and claim above are standard results in complex analysis. If you want to try to prove them yourself, you should first try to show that each matrix can be written as products of an upper triangular matrix with ones on the diagonal, a diagonal matrix, and the matrix

, and see what is the corresponding Mobius transformation for each such matrix.

In this new world, there is a nice interpretation of our mediant process. First, since we deal with real valued matrices, we can restrict our attention to the upper half plane and the real axis. Our original process began with and

. These are of course on the real axis, but other than that no line on the upper half plane passes through them. However, there are many circles passing through each of them, and each of these circles are tangent to the real axis. Of all of these circles, there are exactly two which pass through

and

respectively, and are tangent to each other as well. These are the circles with radius

around

and

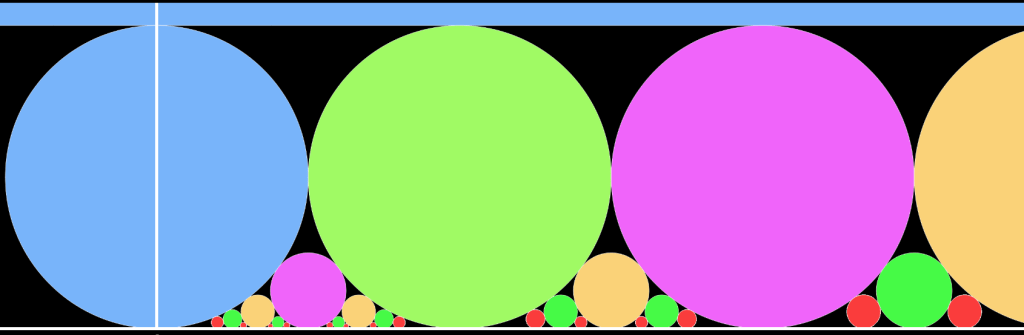

respectively (blue circles in the image below).

We now have 3 lines\circles – the two circles from above and the real axis – every two of which are tangent to each other. Is there another circle which is tangent to all three of them? There is one and it touches the real axis at exactly which is the mediant of

and

(green circle below). We can continue like this, each time take two circles and the real line and find another circle which is tangent to all of them, and then we will get this picture:

In other words, the points where the circles touch the real axis are constructed exactly using the mediant process! So while the average that the mediant “define” is not exactly the middle like the arithmetic mean, it is the “middle” where these new circle touch the real axis.

We can go even further than that. Initially, we looked for another circle which is tangent to both of the big blue circles above and the real line. But in the world of Mobius transformations circles and lines are the same. While we don’t have another circle which is tangent to all of these, there is another line – the line . This line is “tangent” to the real line at the point at infinity. Starting at this “infinity”

point and at

we instead see the following picture:

This way we get all of the positive rational numbers and not just in the segment . Of course we can reflect these to get the negative rational numbers as well.

Finally, in the picture above we have a lot of circles and exactly two lines ( and

). However, for the Mobius transformations all the lines and circles are the same, and we can actually convert the real line to a circle and the upper half plane to the inner part of that circle. Using this transformation (+the reflection from above), brings us to this picture in the Poincare Disk:

While you need some more knowledge in complex analysis and a bit of dynamics to actually appreciate the mathematics itself behind these images, it is nonetheless nice that we can use mediants to create these interesting pictures.

You can view the code for creating the images above (or just create more images like them) here: https://openprocessing.org/sketch/1014060

And even more …

While in the previous post we saw how the mediant can be studied using elementary mathematics, here we saw that it appears in all sorts of interesting places in the more advanced parts of mathematics, whether it is in algebra, combinatorics or complex analysis. These mediants and in particular our mediant process appears in other places, and in particular probably its most natural location is in continued fractions and the dynamics it defines, and it becomes even more interesting when tried to generalize to other places, but I will leave it for another time.