We all know pancakes and how delicious they can be if prepared properly, and it is only natural to want to share one with your closest friend. However, cutting a pancake exactly in half, one half for you and one for your friend, is no trivial matter. It is even less trivial once you realize that your friend also has a favorite pancake that he wants to share, so now we need to make two such cuts. In this post we will show that, with a bit of mathematics, not only can you cut both pancakes exactly in half, but you can do it simultaneously with a single straight line.


Tags

- Borsuk-Ulam

- Cayley-Hamilton Theorem

- characters

- Chinese remainder theorem

- Compression

- Diophantine approximation

- Dirichlet's theorem

- Dirichlet's approximation theorem

- Dirichlet's unit theorem

- Domino

- Dynamics

- eigenvalues

- Entropy

- Euclid

- Fourier transform

- gaussian integers

- Generic Matrix

- Geometry

- Geometry of numbers

- Ham sandwich

- Homogeneous spaces

- Hyperbolic space

- intermediate value theorem

- isoperimetric inequality

- Klein group

- lattice

- Lattices

- Linearity testing

- mediant

- Minkowski's Theorem

- mobius

- Number Theory

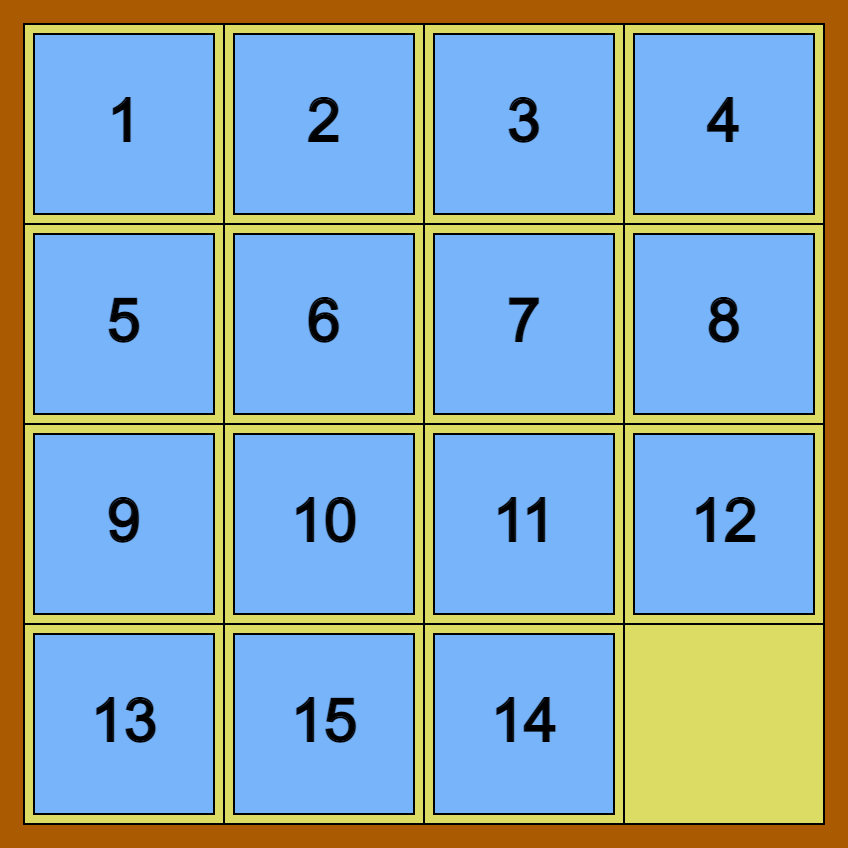

- Peg Solitaire

- Pell's equations

- planar geometry

- poinare disk

- prime numbers

- Pythagoras

- radar

- random walk

- self similar sets

- shortest vector problem

- Sierpinski carpet

- Sierpinski triangle

- SL_2(Z)

- stochastic matrices

- Weak Law of Large Numbers
