Taking a final exam in a course is not really a celebration cause for most students, but it is just not as fun to be on the other side as exam checkers. It can easily take several days to grade the answers for the same question for hundreds of students. The exam checkers would probably tell you that by just reading a few lines from the answer they know if it is going to be (mostly) correct or not, however they still need to read all of it just to be sure. These couple of lines can still be misleading – a good start can still lead to a complete failure, and a bad start might eventually, somehow, get most of the details right.

So maybe when checking exams we cannot really do it, but are there problems where reading only few lines from a proof is enough to determine its correctness? As it turns out, certain problems have innate structures that makes them more “stable” than others – if you write a “wrong” solution for them, then you can see it almost everywhere, so reading just a few lines will be enough.

One of the best examples for this kind of problem is the linearity testing. Suppose that someone claims that a function is linear, and to prove it they show that

for all

. What Blum-Luby-Rubinfeld (BLR) showed that in a sense it is enough to know that one of this conditions (chosen randomly) is true to conclude that all of them are true with high probability. In this post we will try to see what is so important about linearity, and which structures hides in this problem, to make this miracle happen.

Probabilistic proofs

As it is usually the case, our story starts with the famous linearity testers – Alice and Bob. Bob has a function , which he claims to be linear, namely

for any two vectors

. Alice, who needs some convincing, chooses two such vectors

randomly, and checks that

. If true, she will accept the function as linear and otherwise reject it.

Of course, if , then

is not a linear function so Alice was right to reject it. However,

might still be not linear, even if

, so Alice might be wrong claiming that it is. The question is what is the probability that she is wrong in this case.

Suppose Bob actually computed a linear function , but got a few mistakes along the way, so that the new function

is not linear, but very “close” to being linear. In this case, the chances that Alice chooses one of these corrupted values is very small, so even though

is not linear, with high probability Alice will say that it is. This is a problem that we can’t really overcome, and in general it doesn’t matter too much in practice. Alice will probably not use all of the values of the function, so there is a good chance that she will not even see the corrupted values. Further more, if

is close enough to a linear function, Alice might be able to fix its problematic values.

The question becomes interesting when Bob doesn’t really start from a linear function, and his function is far from any linear function (for example, this can happen if Bob didn’t really study to the test, and just chose the function randomly). If this is the case, what is the probability that Alice is going to reject? For example, if for every linear function

, Bob’s function

has at least 5% of its values different than

, then can we expect Alice to have more or less at least 5% chance of rejecting the function?

This miracle property is called the Soundness of the problem. Let’s give a more formal definition.

Definition: Given two functions

, we define their Hadamard distance by

. In other words,

measure the chance of choosing a vector on which

and

disagree, and in particular

if and only if

.

For a set

of functions, we write

.

With this definition, we want to show that following:

Theorem: Let

the set of linear functions. Then for any

, the probability

is at least

.

In other words, if is far from being a linear function, then there is a good chance that Alice will notice. Note that Alice can always repeat this protocol to increase her chances to reject the function in case that it is not a linear function. Even if she is wrong with probability

, she can run the protocol 100 times to lower her chance of being wrong to less than

(and 100 checks is usually much less than the full

checks).

From simple to complicated

Many times, when facing a new problem, one of the biggest road blocks is where to start analyzing it. One of the approaches that I have found useful over the years, is trying to start with a more simplified version to get some intuition and after that add the structure back until returning to the original problem. This can be done, for example, by looking at a specific simple case of the problem, or, as I will do here, simplify the language that we use to study it.

Set Theory

In mathematics, one of the most simplest of languages is that of set theory. In this world of sets, and in particular of finite sets, the first question we should try to answer is what is the size of the sets. Our problem contains two sets – the set of all functions, and the linear functions

,

.

Let us start with studying and then move to

.

In general, determining the size of sets is not an easy task. However, in our case the set has a very unique structure. It is the set of all functions from

to

. This means that its size is

, so if we know the sizes of both of these source and target sets, we can compute the size of

. Note that

in itself is also a set of functions (from

to

) so we can use the same trick here as well, and conclude that:

Algebra

After set theory, the next language we should use is the one of linear algebra. While it can be very technical at times, it is quite easy to work with (and there is a reason that it is usually studied at the first semester). Our three sets from above, and

are actually vector spaces over

. When you start seeing vector spaces, you should always look for their dimensions as well, which in a sense, together with the field, determine the vector space completely. In particular, over the field with two elements, the dimension is just

, so we get that:

Symmetry and Geometry

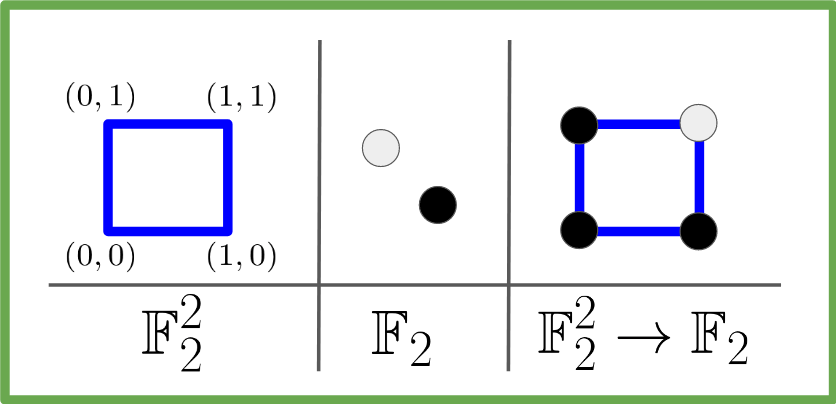

Next in our journey, we will look for all sorts of symmetries that our objects have. To get some intuition and be able to visualize the objects, lets restrict our problem to the case. In this world, our first set is just

which are just the vertices of the unit square. We can visualize the target set

as the black and white colors, so that a function

is simply a colored square:

The importance of this visualization is that these sets have a lot of interesting natural symmetries like the rotations or reflections of the square, and these are closely connected to our objects.

Since our sets are actually vector spaces, they come with the natural addition operations which act on them. For example, in our black\white visualization of the set , adding

does nothing, while adding

flips between the colors. The addition in

in the geometric presentation, acts on the vertices. Here too adding

does nothing, but for example adding

switches between

and

, and in our square presentation this is simply the left to right reflection:

Similarly, adding is the up-down reflection, while adding

is the rotation of 180 degrees.

Once we have these symmetries on (switch colors) and on

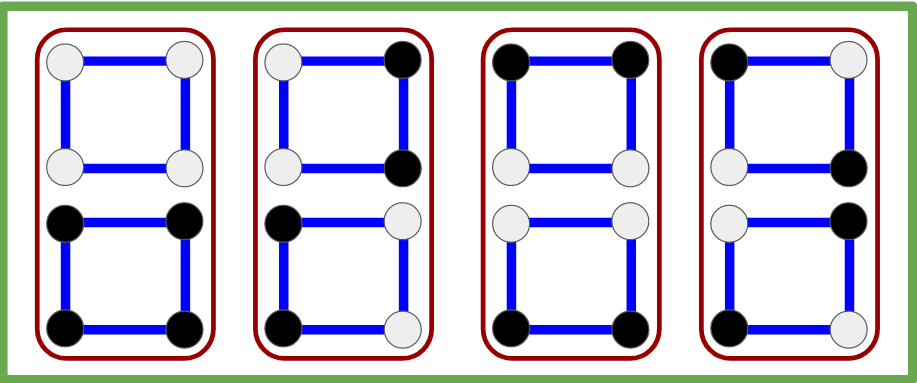

(reflections and rotation), we can apply them to our colored squares in

to get, for example, this:

This is already a lot of interesting information, and we haven’t even started to look at the linear functions. The hope is that we can use each of these worlds of set theory, algebra and symmetry on these linear functions and combine it with what we saw on this larger set of all binary functions to produce some interesting results.

Describing the linear functions

Set theory of Linear functions

The set of linear function is defined by the linearity condition, namely

.

Unlike the set of all functions, we cannot easily use this presentation to find the size of this set. However, this definition of linear function is not random, and it is rooted deeply within the algebraic structures of all of our sets. In particular, one of the first results that we learn on such functions, is that linear functions are determined completely by their values on a given basis of the vector space. Alternatively, every function from a basis can be extended uniquely to a linear function. Putting it more formally, if is a basis, then the restriction

to this basis, which is a map

is a one to one and onto function. This new set is a “set of all functions”, so we can compute its size

.

Algebra of Linear functions

The size of equals exactly to the dimension of

, which immediately raises the question whether it is a basis for

, which is nice to know in the land of algebra. However, we can quickly discard this idea since the set of linear functions is closed under addition, or in other words – it is a subspace (while in a sense a basis is a set “as far away as possible” from being close under addition). This might be disappointing a bit, but the property of being a subspace comes with its own advantages, and this coincidence of having the same size as a basis might (and will!) come handy in the future.

The symmetries of Linear functions

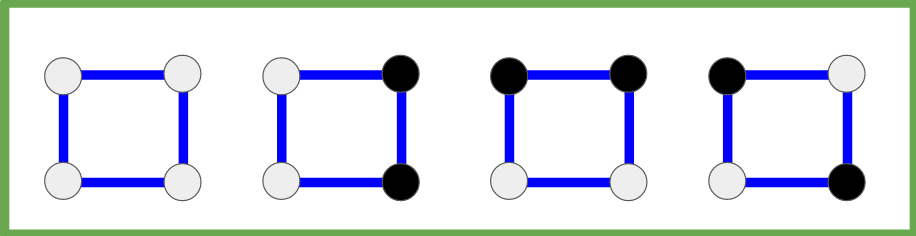

Returning to our case, we know that there are

linear functions, and we can find and visualize them as colored squares (white=0, black=1):

What happens if we apply our reflections and rotation on these linear functions? No matter what we do to the full white square (the trivial function) it remains in place. However, if we apply them to the second colored square, the up-to-down reflection keeps it in place, but the left-to-right reflection and the 180 degrees rotation do not. But not all is lost – these two transformations are the same as simply switching the colors (which is the symmetry which comes from the addition in !). The same happens with the other two linear functions. So in a sense, we should probably look at the pairs of functions:

In mathematics, when we have some action on a set, as above, and an element doesn’t change under this action (like the black\white square), we say that it is invariant under this action. The nontrivial linear functions are not invariant, but they are really close – they are invariant up to the color change. These “almost invariant” elements tend to appear in many places in mathematics, from simple examples like these rotating squares to the most advanced parts of mathematics.

If you are reading this post, then there is a good chance you already know some of these “almost invariant” objects really well. Indeed, maybe the best example for this behavior is when a linear transformation acts on a vector space

. The simplest behavior we can hope for is to find

such that

, since then, for example, we can immediately compute

. However, since this is a very restrictive condition, we look for a more general definition where we only require that

for some constant

, or in other words – we look for eigenvectors. These “almost invariant” vectors are simple enough so that we could work with them, with a condition not too restrictive so they would actually exist, and as we shall soon see, this is exactly what we have in our problem.

The eigenvectos

In our problem, the posible eigenvalues are , so eignevectors are either sent to

, or are completely invariant, which is not good enough to describe our linear functions. The almost invariance up to the color switch means that

is either sent to itself, or to the function

, so we add the scalar rather than multiply by it. Luckily for us, this is not too much of a problem, since we can use the standard trick to move from addition to multiplication – apply the exponent function.

Instead of working with , we think of these two elements as

and

respectively, to get the set

. Converting our addition to multiplication, our sets become

,

both of which are contained in the -vector space

of dimension . At least in the

case, our arguments from above show that

are eigenvectors for all of our square symmetries (reflections and rotation). For the more general case:

Definition: For

, define the linear transformation

by

.

Claim: For any

, and a multiplicative linear function

we have that

– namely

is an eigenvector with eigenvalue

.

Proof: Follows immediately from

.

Now we are at a point where we have a set consisting of only eigenvectos, and has exactly the size of a basis, which begs the question – is it a basis (over

), and therefore make our symmetries diagonalizable?

Orthogonality

To prove that is a basis, it is enough to show that it is an independent set. We can show it directly for any fixed

by doing some manual computations, but this looks like too much work. Instead, lets try to extract some more information from our new visualization:

We already mentioned that these colored squares are almost invariant, but there is actually an even simpler property that we can see. The number of black and white vertices is always even, and while in the trivial function all the vertices are white, in the rest the number of black vertices equals to the number of white vertices. Now, after we moved to the values instead of

, this is equivalent to having the sum of the values equal zero – exactly two

values and two

values.

What can we say about such vectors? For we are in a

-dimensional space, which is a bit harder to visualize, so lets go even smaller to

, in which case our two linear functions are the trivial function, which has values

and the only non trivial linear function which has values

. In the plane they look like this:

The two vectors representing the functions have the same length, but more importantly they are orthogonal to each other. If we can show that in general the linear functions form an orthogonal set, then we automatically get that they are independent, and since it is a set of the size of the dimension of our vector space, they must form a basis.

As we shall see soon, “moving” from one linear function to another

equals their ratio

which is (multiplicative) linear in itself. Measuring the cosine of the angle is going to be the sum of the values, so unless

we expect to get 0, so that the functions must be orthogonal.

Orthogonality formalization

To prove this claim, we start with the definition of our inner product.

Definition: For two functions

we define their inner product as

.

With this definition in mind, lets see what we can say about functions from which have values in

and in particular about linear functions.

The first immediate result is that , so they are all on the unit sphere. Next, by definition

. If both

, then so is their product

and the same hold for multiplicative linear functions in

. In particular, this product is trivial

if and only if

. You can either try to prove it directly, or note that this is exactly equivalent to

and

being vector spaces (close to addition and the field is

).

The inner product is simply

– the summation over all the values of

up to out normalization. If

, then it is simply 1. However, if

is multiplicative linear, it has exactly two values

, and each one appears exactly half of the times (this is a group homomorphism), so that

. Putting all of this together we get that:

Claim: Let

be any two functions. Then

1.

, and

2. If both functions are multiplicative linear, then

Corollary: The set of multiplicative linear functions

forms an orthonormal set in the set of all functions

and therefore forms a basis.

Back to linearity testing

Now that we have all this new information about binary functions and linear functions we can return to the original problem of linear testing.

In the original problem, we wanted to show that if a binary function is far from a linear (multiplicative) function in the Hadamard distance, then with high probability when choosing

we will get that

, and then Alice will reject the function. Both the distance and the condition are essentially described using the standard basis. Defining

,

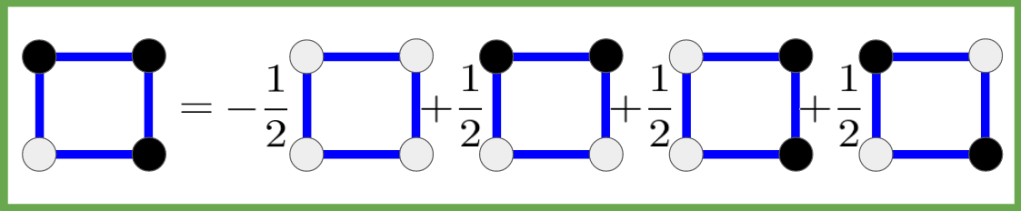

we think of as the standard basis and we can write

(where

.

Combination of the standard basis’ elements where 1=white, 0=green and -1=black.

Note that this basis is almost orthonormal – its elements are perpendicular to one another, and all have length . Now, the Hadamard distance basically ask how many of the coefficients are different in this presentation. Or more formally, if

with

, then

.

Similarly in the linearity condition, is “defined” using this basis, since we look at the coefficient of and

.

However, we just saw that there is another basis, which consists of linear functions, so we can write our function as a combination of these vectors:

Lets denote these linear functions by , so we can write

. If the function

was a linear function, then the coefficients would all be zero, except one which will be

. Using the orthonormality to our advantage, we also get that for any binary function

.

It follows that if the function is not linear, then all of the coefficients would be in

. What can we say about the distance of the function from some linear function? While the distance was measured using the Hadamard distance, any orthogonal basis is just as good, so

.

In other words, saying that the a function is far from all of the linear functions, is exactly saying that the are far from

, or more formally

.

We already see that in this new basis our distance function has a much simpler interpretation, and the main difficulty is to move from one base to the other, though this becomes simple if we can describe our expressions using inner products.

What about the probability for Alice to reject the function? If we can somehow rewrite it using the language of the inner product, we could move to this basis as well, and in this basis all the functions are linear, so it would be a much easier place to work in. And indeed we can rewrite it in the new basis and get that Alice will reject the function with probability at least . For readability, I will put the proof in a new section, though it is very similar to what we saw here, but with extra details. The details themselves are less important than the idea of moving from a basis which is easier to describe the problem and the protocol to a basis which is easier to work with (and I encourage you to try prove it by yourselves).

The linearity condition

We would like to count how many pairs will lead Alice to reject the function. For a single pair, it is not hard to see that

,

so we want to sum over these expressions. Then the probability to reject will be

The term in the sum looks similar to an inner product, but has three factors instead of two, and have a double summation instead of a simple summation. As it turns out, this is because we have in a sense two inner products. First, we can define , so that

.

We would much prefer to work with the coefficients of in the basis of linear functions, since there we can see clearly the distance from linear functions. As for

, since

(it is in a vector space over

), we can write it as

which is an expression that appears many times in mathematics called a convolution and is denoted by

. This convolution behaves like a function product in the sense that it is associative and distributive with respect to the function addition. But what is more important, is that the coefficient of

in the expansion of

is the product of the coefficient of

in the expansion of

times the coefficient in the expansion of

(try to prove it!). Putting all of this together, we get that

This means that the probability for Alice to reject is

which is exactly what we wanted to show.

In conclusion: So what did we do?

Trying to analyze our problem, we looked for simple ways to describe its objects. We started with considering them as sets, then added the addition to get algebraic objects, and then used these addition operations to find symmetries and actions on our sets. When applying the same process to the linear functions, we concluded that they are also orthonormal eigenvectors which led us to a new interesting basis.

Then the main trick was moving from one basis to the other. The standard basis is what we usually work with, and it is easy to describe the problem in this basis (for example, the distance is measured by simply counting the number of different coefficients). However, the second basis is far more related to the essence of the problem – it is exactly the set of linear functions. Both bases were orthonormal (up to a uniform normalization), so when the expressions were transformed to use inner products, it was very easy to move from one to the other. Once we moved all of our notation to this new basis, the problem became almost trivial.

Along the way we also encountered the convolution operation, which behaved nicely with respect to this base change. You might have seen this sort of behavior in another place – the Fourier transform. This is not surprising, since this base change is the Fourier transform, and this interesting result about the convolution is one of the main reasons that the Fourier transform is so important.

All of these different structures, the bases, and the conversion between them were miraculously combined together to show that if Bob didn’t really make an effort to create a linear function, then Alice would have a good chance to detect it. It does, however, rely on the fact that Bob is honest. If Alice asked Bob for the values of on

and

, Bob could reply any three values of the form

and

(instead of

and

) so that Alice will always accept. For this post, we would assume Bob’s honesty, but if we really wanted, we could change the protocol and have Bob commit to the answers before Alice asks for these values, but we will leave it for another post.

As a final thought, I would like to point out that this miracle combination happens a lot. After all, it is the basis for the Fourier transform that every engineer knows by heart, or its mathematical name – group representation. This in itself is a subject on its own, but once you understand its basics, results like those in this post become much more natural.