1. Introduction

In this post we consider an interesting mathematical process which can be easily simulated by a computer, and generates interesting pictures. A video version of this post can be seen in here (for now in Hebrew).

Let and

be the vertices of a triangle

and choose a random point

in the triangle. Next, choose one of the triangle vertices uniformly at random and move

half of the way towards it – we denote this point by

. We now repeat this step infinitely many times – given the point

we choose uniformly at random a vertex from

, move

half way towards that vertex and denote this point by

.

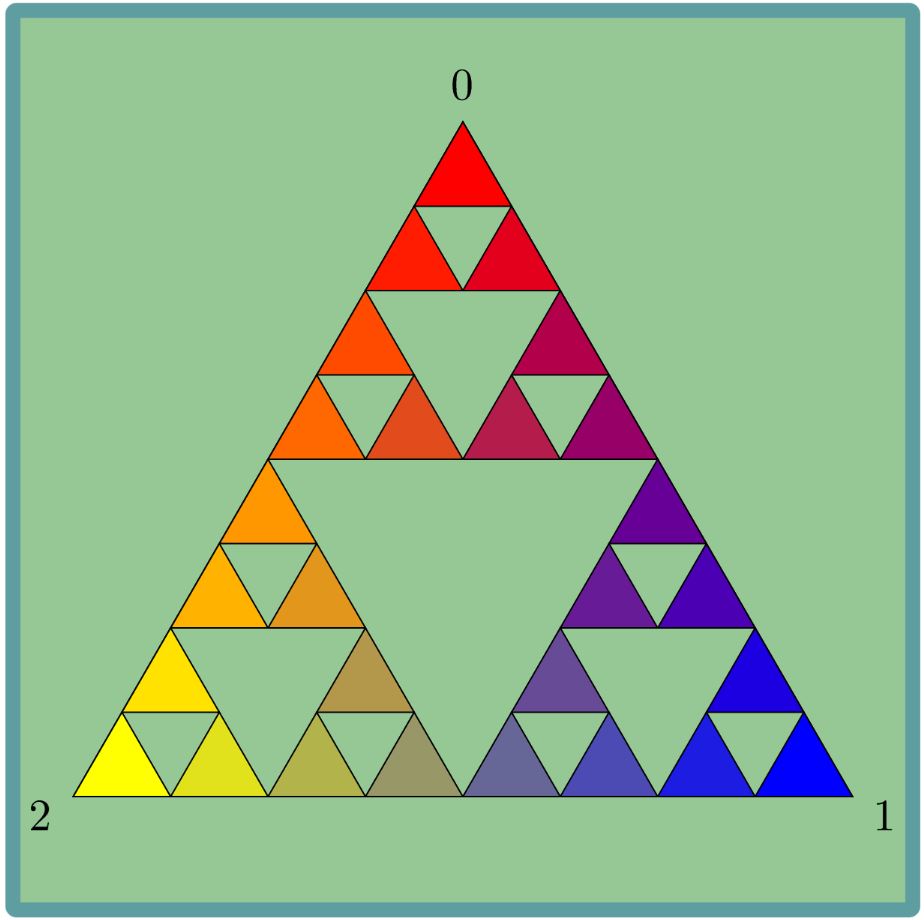

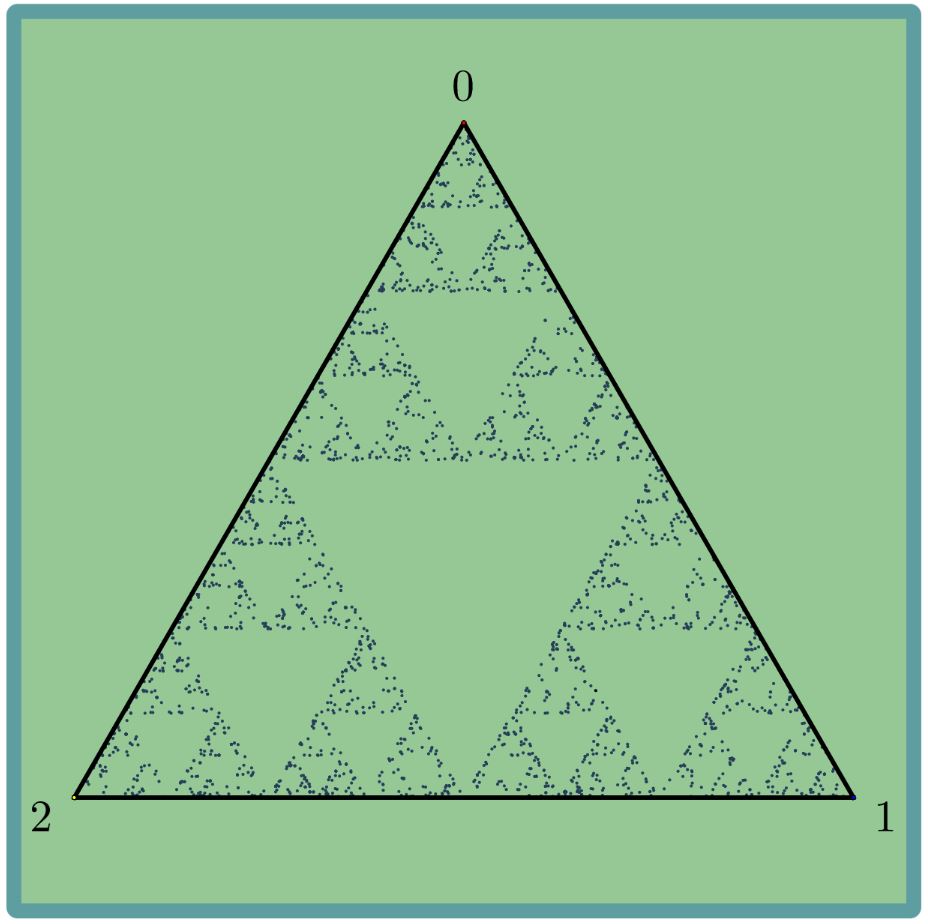

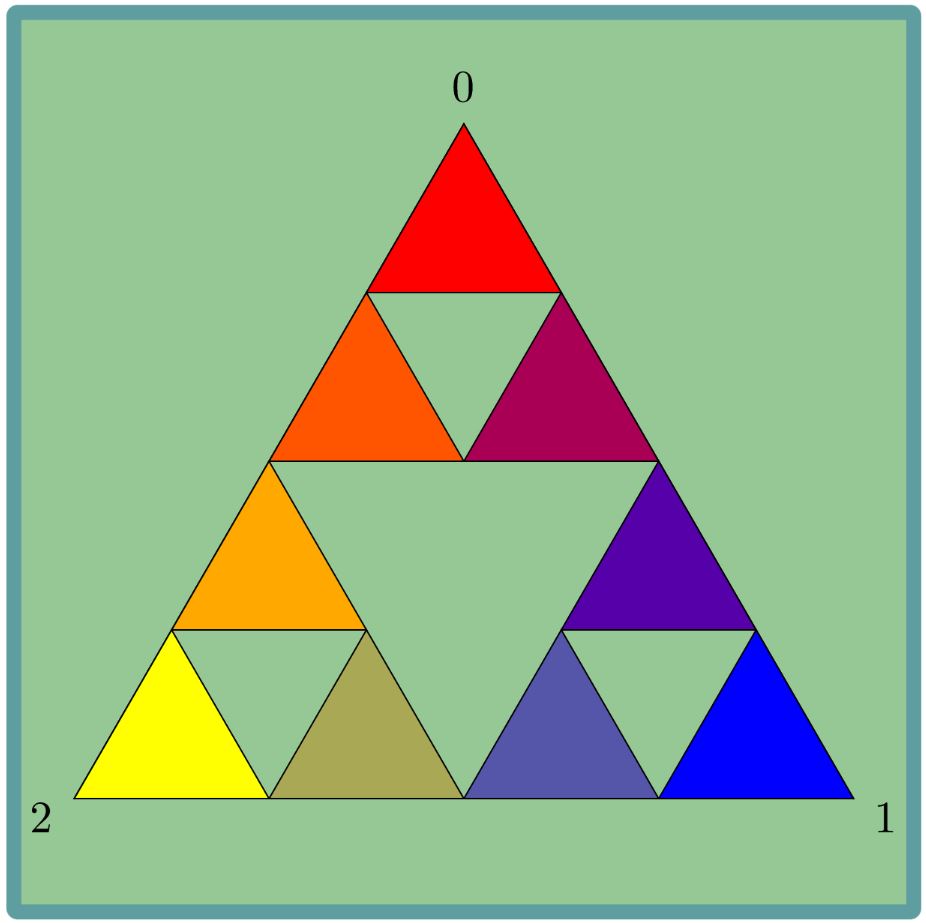

The “limit” of this process produces a very interesting picture, as can be seen below.

This process is called a random walk, since at each step we choose one of the vertices at random and continue according to this choice.

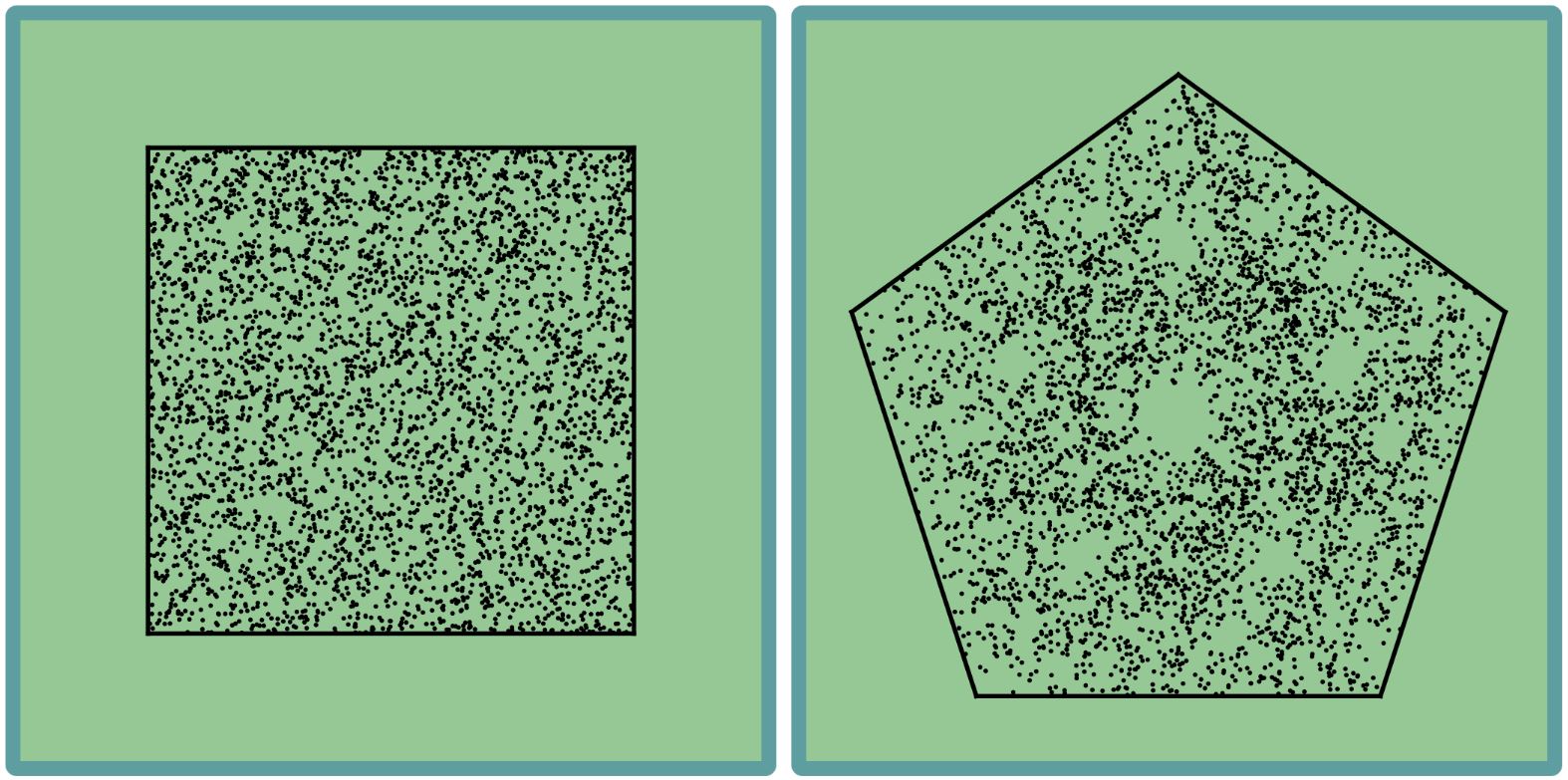

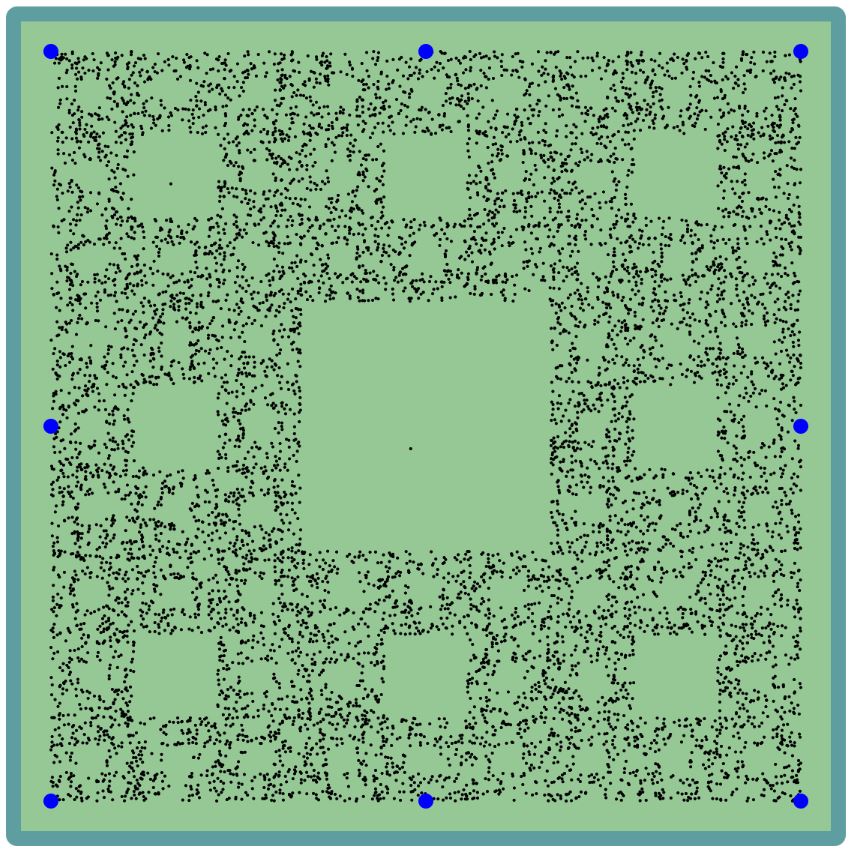

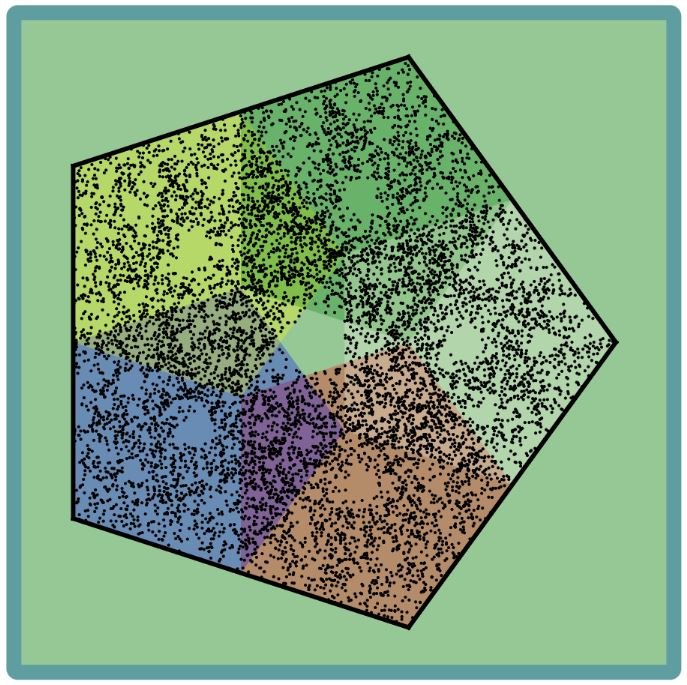

An interesting and natural question is if we can create more interesting pictures by starting with a different shape. For example, here are the result of the same process, but starting with a square and pentagon.

For the square, it seems like we just have a uniform distribution of points. For the pentagon the picture is not that simple – some places are empty (e.g. the center), while some are quite full.

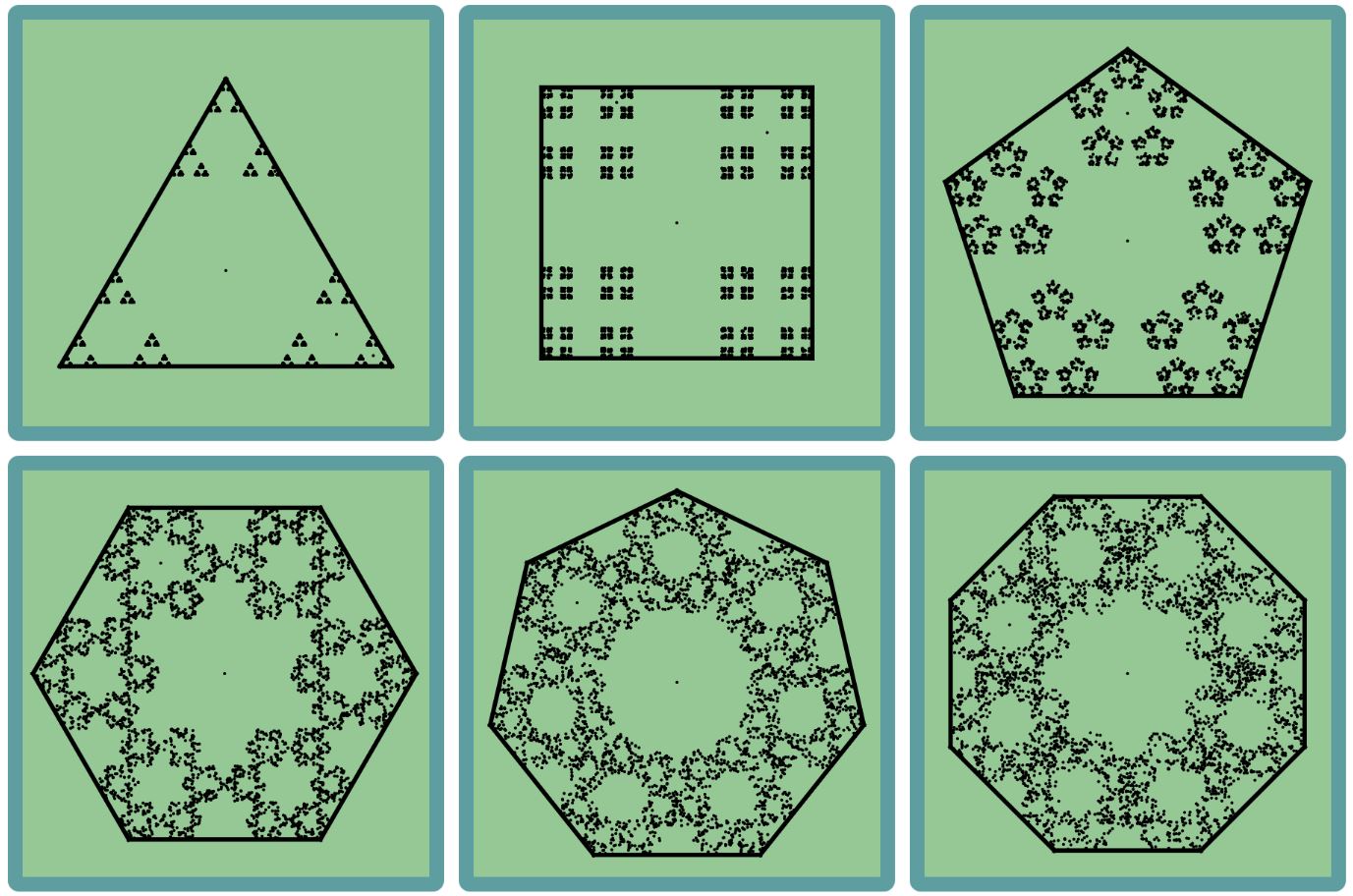

To better understand this process, instead of going half the way towards the vertices, we can use a different ratio. For example, if we move of the way, then we get the following images:

For and

vertices, we can clearly see small copies of the original polygon in the final image. The “type” of image is similar to what we have seen with the triangle and ratio

. But for

and

it becomes more complicated and it is harder to recognize the smaller copies of the polygons.

On the other hand, we can rearrange the vertices a little different, still with ratio 2/3, and get an interesting picture like in the triangle.

What we will try to do in this notes is to understand some of the mathematics behind these random walks and the final images that we get.

2. The Sierpinski Triangle

In this section we will concentrate on the process with ratio 1/2 when we start with the triangle. In this case the final image is called the Sierpinski triangle. In this section we will try to show that the only image possible (more or less) for this walk is the Sierpinski triangle

Before we start, recall that we have the following notation:

- The points we get in this process are denoted by

.

- Denote the three vertices of the triangle by

and

.

- Denote the choices of the vertices by

for

.

Note that moving towards a point with ratio

is exactly the function

.

In particular, in our case, the maps describing moving half the way towards the vertices are

.

2.1. Simplifying the problem

As with many difficult problems in mathematics, and in general, the first step should be to try and simplify them as best as possible. Once we solve a simple version of the problem, we can hope to (1) get some intuition about the general solution and (2) use the simple result and extend it to get the full result.

In our case, instead of considering infinitely many steps simultaneously, let us consider just the first step, namely what can we say about ? While

can be any point in the triangle, the point

is more restricted and in particular it depends on the first vertex that we choose. More specifically, we can play around with the choice of

, and see that

must be in one of the small colored triangles as in the picture:

Can we show it directly from the process definition? Yes, but before we prove that let us consider a very special case – suppose that we chose in the first step and

. In this case

is just a contraction of

– we divided in by

. This is actually what happens in general, but instead of contracting towards

we contract towards

. To see this, write

, namely start at

and move by

. Then

namely we start at and shrink the distance by a factor of 2.

This is of course true if we chose or

in the first step. Namely, we have just shown that:

Corollary:

The function is a contraction times

towards

.

Now the claim is clear. If we start with a triangle and shrink it by a factor of 2 towards one of its vertices, than we get a times 2 small triangle near the corresponding vertex.

We now know that the point is in one of the smaller triangles which we denote by

or

corresponding to the first vertex that we choose. What about the next point

? We can again try to see where it can fall, and do the same process as before, but let us keep our problem for now as simplified as possible and only ask in which colored triangle is it. Well, then we already did all the work above – the point

will be in the colored triangle corresponding to our second vertex choice. Because we only consider this simple problem, namely which colored triangle contains the point

, we can easily solve it – it is the triangle corresponding (only!) to the vertex choice in the

-th step.

2.2. A partial result

Now that we have a very simple observation, let us study it and try to prove some partial result which will hopefully lead to the proof of our original result.

Under our simplified problem, we can only ask for each which colored triangle contains the point

. After seeing

points, a natural question to ask is how many points does each triangle contain, or more formally, for

what is the size of the set

Running a simulation we can see that is more or less

for each

. Trying to quantify this “more or less” statement, we can increase

and see that

doesn’t really stay this closed to zero. However, if we divide by

, namely if we ask what is the ratio of the points in each triangle compared to the total number of points, we see that

do become closer and closer to zero as

grows to infinity. Note that this result is very natural if we believe that the limit is going to be the Sierpinski triangle. Indeed, the Sierpinski triangle is a union of three copies of itself (almost disjoint) corresponding to our three contractions:

This claim about the orbit of our point is of course not always true. If for some reason we keep choosing only the bottom left vertex, then all of the point (except maybe the first which we ignore) will be in (the yellow triangle). This means that

while

. But recall that in each step we choose a vertex uniformly at random, so the chances of such a choice is basically zero. Thus, the right formulation of what we mentioned above is that “with high probability

for each

”.

Remark:

Notice the difference between what we know and what we claim. We know that in each step we choose uniformly at random, namely with probability each triangle. What we claim is that if we use this process of choices for long enough and then look back and count how many times we chose each triangle, then it is very likely to see close to

of the choices for any triangle.

Let us give a proper formulation of this claim.

We begin by denoting the choice of indices for our vectors by . So for example, if we choose

and then

and then

again, then

,

and

. The claim that we proved above that the triangle containing

only depends on the choice of vertex in the

step can be written as follows:

Lemma:

For each we have that

.

We can now write as

This is a much better definition for , because the

are chosen independently while the

depend on all the previous choices. Luckily for us, we showed that for the question of whether

, we only need to know

. Finally, we can write our claim about

as:

Claim:

For every we have that

This claim should be understood as follows: there are many ways to choose our vertices in each step, but out side of a very small collection of bad choices, we are always spending of the time (with some

error) in each triangle. Moreover, as we take more and more steps the collection of bad choices becomes smaller and smaller compared to the total amount of choices (still for a fixed

).

The experienced probabilists will immediately see that there is another way to formulate this limit which will follow from the well known (and elementary!) weak law of large numbers. The main trick here to think of as summing up 1’s for each time

.

Let us fix and define a random variable

for each

such that

if

and

otherwise. In other words

checks whether we are in the

trianagle or not after

steps. Now, if we want to know how many times we have visited the

-th triangle, then we only need to sum up those

, namely

Thus, in the claim above we want to prove that

The term is an average of random variables, and furthermore these are independent and identically distributed (i.i.d) random variables, with

(the chance of choosing the

triangle) and

for each

. The “average” of a single such

, namely the expectation is

The weak law of large numbers say that if the are

and the “average” of a single

is

, then with high probability the average of all the

together is close to

.

Theorem (Weak Law of Large Numbers):

Let be i.i.d with expectation

, then for any

we have that

2.3. Improving the partial result

What we did so far is:

- We tried to simplify our problem by ignoring the “infinitely many points” and only consider the first two point.

- This led us to consider the 3 small contractions of the original triangle.

- Restricting our attention only to these triangles, we conjectured that the orbit of the point spend around

of its time in each triangle.

- We got a little bit into the details of this claim and added the “with high probability” part.

- Finally, we showed that our simplified conjecture follows from the Weak Law of Large Numbers.

Now we can try to repeat this proof process but with a little bit more information. Considering the first 3 points, we can show that the third point must be in one of nine very small triangles, depending on the first 2 choices of vertices:

As before, we can again ask how many times we visit each triangle, and a simulation will show that we spend around of the time in each triangle. In the previous step we had the triangles

, and

was in

if and only if

. Generalizing this notation, we can denote the smaller triangles in the picture about by

such that

if the two vertex choices before we get to

are

and

. Equivalently, we have the following generalization of out previous lemma.

Lemma:

For all we have that

.

Continuing to follow the previous step, let us fix and define random variables

such that

if

and

otherwise. Thus, counting how many of the

for

are in

we get the expression

. So saying that we spend around

of the time in each triangle can be formulated as

where as before

Unfortunately, we cannot use directly the Weak Law of Large Numbers, since the are dependent. Indeed, because

correspond to the

and

choices and

correspond to the

and

choices. Fortunately if

then

and

are independent, so we can hope for a generalization of the weak law.

Recall that we can “measure” how much two random variables are dependent by computing their covariance

and in particular is equivalent to

being independent.

In our case if

, and whatever

is, it is easily seen to be independent of

.

In order to generalize the Weak Law of Large Numbers, let us first recall its proof using Chebyshev’s inequality.

Theorem (Chebyshev’s inequality):

Let be a random variable with finite expectation

and variance

. Then for any

we have that:

This already looks similar to the weak law. Just put where the

are identically distributed with expectation

, to get that

also so that

If we can only show that goes to zero as

, then we are done. This follows from the fact that

In the case where the are independent, all of the

are zero, so that

. But even in general, if we can show that the sum

is not to big, then the limit is still zero. I will leave the rest of the proof to the reader, to show that the Weak Law of Large numbers still holds for our two steps process, and actually this can now be straight forward generalized to any

steps.

To conclude, we can keep subdivide our triangles, each one to 4 smaller triangles, and remove the middle one, and for each such configuration the orbits of our point will spend (up to some -error) the same amount of time in each triangle.

So now we know that if we fix a triangle size, the orbit spends the same amount of time in each triangle. The only shape that has this property is the Sierpinski triangle, so whatever the definition of “limits of random walks” is, the limit here should be the Sierpinski triangle.

2. The other examples

We saw that the random walk process implied that “its limit” should be “equality distributed” in our first three colored triangles, and then after the second step in the nine smaller triangles, then triangles,

triangles etc. Since the Sierpinski triangle satisfy this – it is a disjoint union of its three (respectively 9,27,…) smaller contractions – it must be the “limit”. We shall give a proper definition for the limit in a future post, but for now, let’s see if we can use our intuition to understand the rest of the example.

The square is a disjoint union of four smaller (by a factor of 2) squares near its vertices. Thus, just like the Sierpinski triangle, it must be the limit of our random process with 4 vertices.

On the other hand, for the pentagon, the 5 contractions are not disjoint, so we do not expect a “simple” picture as in the case of the triangle and square. Note how there seem to be more points where the smaller pentagons overlap.

When we switched to ratio , the contractions are smaller (by a factor of

), so it is easier for them to be disjoint. This is why we can nice and “simple” picture for up to 6 vertices.

Finally, when we rearranged the 8 vertices in a square shape, it corresponded to 8 disjoint contractions of a square, thus giving us a final image with smaller and smaller squares, very similar to what we got in the Sierpinski triangle.

All the images that we saw so far are call “self similar sets”, since they are similar to themselves after the corresponding contractions. You can now try running your own simulations with random walk and see what images you can come up with. In particular, when you start with a “nice” shape and where the contractions produce disjoint sets, the final image usually seem more interesting. In general these processes usually use more general map and not just contractions, and in particular they use translations and rotations. In a future post, where we will ask what is exactly the limit of a random process, we will discuss this more general setting.